Bracket Science: Surefire ways to measure a team's NCAA potential

It would be a mistake, however, to think that the dynamics of the 1985 tourney are the same as those of last year's dance. The tourney has evolved over the last 24 years, becoming lower scoring, more guard-dominant, and ruled by younger teams, to name just a few of its shifting characteristics. Here are the top 15 trends altering the mechanics of March Madness.

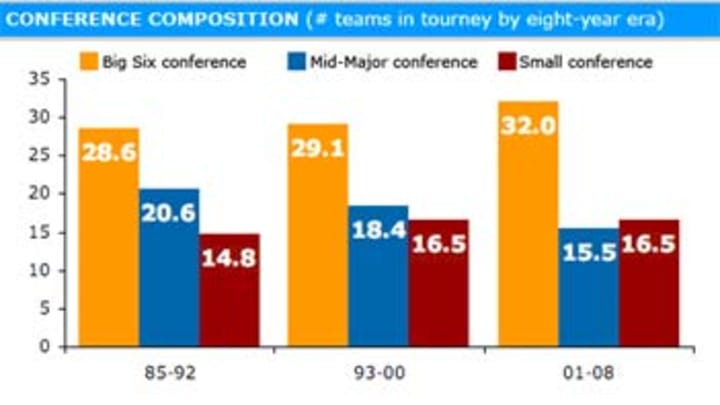

With all the talk of parity in college basketball, you'd think that the numbers would show an increase in the tourney participation of Mid-Major and Small conference schools. In fact, the opposite is true.

Before we analyze the impact of Mid-Majors and Small conferences, we need to clarify where the line gets drawn between the two. My rule for defining a Mid-Major is this: if a conference has had multiple bids in at least one year over the last decade, they're a Mid-Major. Otherwise, they're a Small conference. The last decade has had 11 Mid-Majors: the Atlantic 10, Big West, Colonial, Conference USA, Horizon, Mid-American, Missouri Valley, Mountain West, Sun Belt, West Coast and Western Athletic. But that doesn't mean they've always been Mid-Majors...or that they're the only Mid-Majors since 1985. Who can forget conferences like the Metro and the Great Midwest?
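The Mid-Major rule above is easy to apply mechanically. Here's a minimal Python sketch, assuming you have a per-year count of tourney bids for each conference; the `classify_conference` helper and the bid histories in the usage lines are hypothetical illustrations, not part of the original analysis.

```python
# Hypothetical helper: labels a conference using the "multiple bids in at least
# one of the last ten tourneys" rule. The bid data below is illustrative only.
BIG_SIX = {"ACC", "Big East", "Big Ten", "Big 12", "Pac-10", "SEC"}


def classify_conference(name: str, bids_by_year: dict[int, int], last_year: int) -> str:
    """Return 'Big Six', 'Mid-Major' or 'Small' for one conference."""
    if name in BIG_SIX:
        return "Big Six"
    decade = range(last_year - 9, last_year + 1)  # the ten most recent tourneys
    # Two or more bids in any single year of the decade makes it a Mid-Major.
    if any(bids_by_year.get(year, 0) >= 2 for year in decade):
        return "Mid-Major"
    return "Small"


# Made-up bid histories for two unnamed conferences.
print(classify_conference("Conference A", {2004: 1, 2006: 2}, last_year=2008))  # Mid-Major
print(classify_conference("Conference B", {1990: 2, 2007: 1}, last_year=2008))  # Small
```

Because the ten-year window slides with `last_year`, a conference's label can change over time, which is why a conference can be a Mid-Major in one era and not in another.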

By this definition, the number of Mid-Majors getting tickets to the dance has actually decreased over the 24 years of the 64-team tourney era. Meanwhile, the number of bids going to Small conferences has increased, as has the number going to Big Six teams. Take a look at these numbers:

If you divide the modern tournament into three eight-year periods, Mid-Majors have lost an average of five bids per tourney from the 1985-1992 era to the current 2001-2008 era. Those five lost bids have been scarfed up mainly by the Big Six (an increase of 3.5 bids), but also by Small conferences (a 1.5-bid bump). Part of the Big Six increase can be attributed to the reclassification of former Conference USA teams like Louisville and Marquette. But there's no denying the numbers: Mid-Majors are not gaining more representation in the tourney; they're losing it.
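The three-period split used throughout this article can be reproduced with a small helper. This Python sketch assumes you have a count of bids per year for a conference type; `era_of` and `average_bids_per_era` are hypothetical names, and the sample counts are invented.

```python
from collections import defaultdict


def era_of(year: int) -> str:
    """Map a tourney year to one of the three eight-year eras of the 64-team tourney."""
    if not 1985 <= year <= 2008:
        raise ValueError(f"{year} is outside the 1985-2008 window")
    start = 1985 + 8 * ((year - 1985) // 8)
    return f"{start}-{start + 7}"


def average_bids_per_era(bids_by_year: dict[int, int]) -> dict[str, float]:
    """Average a per-year bid count (e.g. Mid-Major bids) within each era."""
    totals: dict[str, int] = defaultdict(int)
    counts: dict[str, int] = defaultdict(int)
    for year, bids in bids_by_year.items():
        era = era_of(year)
        totals[era] += bids
        counts[era] += 1
    return {era: totals[era] / counts[era] for era in totals}


# Invented counts, just to show the shape of the output.
sample = {1985: 14, 1986: 16, 2001: 10, 2002: 10}
print(average_bids_per_era(sample))  # {'1985-1992': 15.0, '2001-2008': 10.0}
```

A change in representation from one era to another is then just the difference between two of these per-era averages.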

It would be one thing if the Mid-Majors that do make the field were outperforming seed expectations. But they aren't doing that either. In the early era of the modern tourney, Mid-Majors were slight overperformers, with a performance against seed expectations, or PASE, of +.010. In the most recent eight-year stretch, they're slight underachievers (-.008 PASE). That's at least an improvement over the middle period of the 24-year modern tourney era, from 1993 to 2000, when they posted a -.058 PASE. Meanwhile, Small conferences have steadily improved over the three eight-year periods. They've always been underachievers, but their -.007 PASE since 2001 is closer to expectations than their -.033 PASE between 1985 and 1992. It's also a tad better than the Mid-Majors' mark. Of course, the Big Six has always been the best performer among conference types. Its most recent PASE is just +.007, down from a strong +.055 showing from 1993 to 2000. Still, that constitutes overachievement.
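PASE expresses how many more (or fewer) games a group of teams wins per tourney than its seeds would predict. A minimal Python sketch, assuming PASE is the average of each team's actual tourney wins minus the historical average wins for its seed; the `EXPECTED_WINS` figures below are placeholders rather than real seed expectations, and the calculation behind the numbers in this article may differ in its details.

```python
from dataclasses import dataclass

# Placeholder expected tourney wins by seed. Real values would be the average
# wins of each seed line across every 64-team tourney; these are NOT those values.
EXPECTED_WINS = {1: 3.3, 4: 1.5, 12: 0.5}


@dataclass
class TeamTourney:
    team: str
    year: int
    seed: int
    wins: int  # games won in that year's tourney


def pase(entries: list[TeamTourney]) -> float:
    """Average wins above (+) or below (-) seed expectation, per team per tourney."""
    if not entries:
        raise ValueError("need at least one team")
    diffs = [e.wins - EXPECTED_WINS[e.seed] for e in entries]
    return sum(diffs) / len(diffs)


group = [
    TeamTourney("Team X", 2008, seed=12, wins=2),  # +1.5 vs. expectation
    TeamTourney("Team Y", 2008, seed=4, wins=0),   # -1.5 vs. expectation
]
print(pase(group))  # 0.0
```

Read this way, a +.010 PASE means a group won about one-hundredth of a game per tourney more than its seeds predicted.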

The bottom line: mid-majors are getting to the dance less often than in the past...and they continue to underperform. Small conferences are making small improvements in both representation and performance. And the Big Six conferences are dominating the field more than ever before -- while continuing to defy seed expectations.

Since 1985, the typical tournament team has been led by a coach with 5.6 years of March Madness experience. That's the overall average. If you looked at the three eight-year periods of the 24-year modern tourney era, you'd find that 1985-1992 fielded the least experienced coaches (5.3 dances under their belts), 1993-2000 featured the most experienced coaches (5.9 dances), and the most recent period falls in between those extremes (5.7 dances).

Oddly enough, the first eight years of the 64-team era saw the smallest share of first-year coaches in the dance. Only 21 percent of the coaches between 1985 and 1992 were tourney rookies. Meanwhile, 1993-2000, the era when coaching experience was at its highest, also saw the highest percentage of coaching newbies (25 percent). Those rookies were more than offset by the larger share of veteran coaches with at least ten tourney trips in 1993-2000 (21 percent, versus 18 percent for the other two eras).

If you average the number of trips for non-first-year coaches only, you'd find that repeat coaches in 1985-1992 averaged 6.5 tourney bids, 1993-2000 coaches averaged 7.5 bids and the most recent era of coaches has averaged 7.0 bids. What I find interesting is that there appears to be an inverse correlation between tourney upsets and coaching experience. In 1985-1992, the era with the least experienced repeat coaches (6.5 bids) and the fewest "deer-in-headlights" rookie coaches (21 percent), upsets were at their highest (10 per tourney). Conversely, in 1993-2000, the period with the most experienced repeat coaches (7.5 bids) and the most rookie coaches (25 percent), upsets were at their mildest (7 per tourney). The most recent eight-year period has been right in the middle: with repeat coaches averaging 7.0 bids and newbie coaches occupying 22 percent of the bracket slots, upsets have landed at the exact midpoint between seven and ten (8.5). Eerie. Maybe there's something to this correlation, or maybe it's a coincidence.

One thing is for sure though: coaching experience has never had a stronger connection to overachievement than it has in these last eight years. If you look at the PASE values of coaches making their first trip, between two and five trips, or more than five trips to the dance, rookie coaches have always been underachievers. Since 2001, they're just slight underperformers (-.005 PASE)...but that's the best they've been over the three eight-year periods. On the other hand, coaches with more than five tourney trips have never been bigger overachievers. Their +.042 PASE between 2001 and 2008 marks a big comeback over their underperforming -.017 PASE between 1993 and 2000. What about coaches with two to five years of tourney experience? Lately, they're -.028 underachievers...but during the 1993-2000 era, they were solid +.068 overperformers.

Here's another way of looking at whether coaching experience helps in the tourney. Consider the average number of tourney trips for coaches whose teams: 1) make the tourney, 2) advance to the Sweet Sixteen, 3) reach the Final Four, and 4) win the championship. In all three eight-year periods, teams advancing through the bracket are led by successively more experienced coaches. Here's how the numbers graph out:

Not only are teams that go deeper in the tourney led by more experienced coaches, but the size of the experience gap has grown in each of the eight-year periods. Just compare the line for 2001-2008 with the other two eight-year periods: recent Sweet Sixteen survivors are just a little more experienced than their counterparts (8.3 trips to 8.1 for 1993-2000), while Final Four contenders are even more experienced (10.5 to 9.3). And champions? Over the last eight years, coaches boasting an average of 14 tourney trips have cut down the nets. The number was just 11.1 from 1993 to 2000.

The bottom line: coaching experience has always helped confer a performance advantage in the tourney. And, recently, that advantage has gotten bigger.

As critical as a tourney-tested coach is to a deep bracket run, team experience is arguably more important. That said, it matters much less than it did in the early years of the dance.

Between 1985 and 1992, the average tourney team came to the dance with 2.8 consecutive bids. Over the next eight years, team experience climbed to 3.0 straight trips. Finally, in the most recent period, from 2001 to 2008, teams are entering the dance with 3.4 straight invites to the tourney.

The one constant over the last 24 years is that teams that had not gone to the previous year's tournament are significant underachievers. In all three eight-year periods, rookie teams have negative PASE values. Here's a chart that shows the PASE values, for each eight-year period, of teams that: 1) didn't go to the previous dance, 2) are making between two and four straight trips, and 3) have bagged five or more consecutive bids.

You can see by the drooping gold bar that first-year squads aren't good bets to make overachieving runs. That's no surprise. What is surprising is how insignificant an extra couple years of tourney seasoning has been over the last 24 years. Check out the bars for teams with two to four straight trips. In the first eight years of the 64-team era, these squads underachieved at nearly the same rate as first-year teams (-.045 to -.051 PASE, respectively). Yes, they did overperform between 1993 and 2000, but not nearly as solidly as their more experienced counterparts. And since 2001? Teams with two to four straight bracket bids perform almost exactly to seed expectations.

Teams with five or more consecutive tourney appearances are the only consistent overachievers over the last 24 years of the modern era. Between 1985 and 1992, these veteran squads overperformed by nearly a quarter of a game per tourney (+.218 PASE). Since then, they've cooled off a little, but their +.090 PASE in the most recent eight-year era still represents strong overachievement.

How can just a couple years of consecutive bids make such a big difference in performance? Here's my theory: teams that earn two to four straight trips to the dance could be succeeding on the strength of one good recruiting class. On the other hand, teams with more than four straight bids aren't just riding on the success of one class; they've built a program that transcends individual contributions.

Offensive firepower has always been, and probably always will be, a key indicator of tourney overachievement. Seems simple enough: if you put the ball in the basket at an exceptional rate, you're more likely to go farther in the tourney.

Oddly enough, however, scoring in the tournament has been steadily declining over the years. From 1985 to 1992, the average tourney team scored 78.6 points per game. In the middle period of the modern era (1993-2000), teams averaged a bucket lower (76.4 points per game). And since 2001, tourney squads are still another basket lower at 74.3 points a game.

Whether teams have become less efficient with the ball or more deliberate is the subject of another article (and a topic to which my friend Ken Pomeroy could more eloquently speak). But one thing is clear: the higher-scoring teams continue to outperform the lower-scoring squads. The proof is in the PASE. Teams averaging less than 75 points a game have been underachievers in all three eight-year periods of the modern era. Since 2001, they've fallen short of seed expectations at a -.056 PASE clip. Meanwhile, teams scoring between 75 and 80 points a game, after underperforming between 1985 and 1992, have become consistent overperformers. What about teams scoring more than 80 points a game? They've been overachievers in every period of the 64-team era, most recently weighing in with a +.059 PASE.

It is true that there are fewer high-flying teams entering the dance than there used to be. In the first eight years of the modern era, 38 percent of the squads scored more than 80 points a game. That fell to 24 percent between 1993 and 2000. And since 2001, only 15 percent of the teams are as offensively potent. But get this: despite the dwindling proportion of prolific scoring squads, the number of Final Four teams exceeding 80 points a game has risen, from 12 percent in the first eight-year period to 13 percent in the second and 15 percent in the most recent era. Take a look at how the scoring output of tourney advancers climbs the further they go in the tourney:

Two points are clear from this chart: first, there are obvious divisions in the offensive output between each eight-year period. Aside from the championship round, 1985-1992 was a consistently higher scoring era at each stage of the tourney than 1993-2000...which was consistently higher scoring than 2001-2008. The second point is that, as teams advance in the dance, they get progressively higher scoring -- and that hasn't changed even though teams are scoring less these days. Just look at the lines for the most recent periods; the slope is almost identical, albeit one basket apart.

The bottom line: efficient or not, high-scoring teams are high achievers in the tourney. Just look at last year's Cinderella darlings, Davidson and Western Kentucky. What did they have that most other low seeds didn't? Offensive firepower. And look where it got them.

Just as offensive output has steadily declined throughout the 24-year 64-team era, so too have points allowed. In the first eight years of the tourney, teams came to the dance yielding 70.1 points a game. That number has declined by one basket in each of the succeeding eight-year runs. Since 2001, the average tourney team allows just 65.9 points a game.

Before last year, I would've told you that a team's defensive stinginess was not a key indicator of overperformance in the tourney. In fact, in 2007, I argued that teams allowing fewer points per game than average were more likely to be underachievers. That's because I was looking at the aggregate numbers going all the way back to 1985. Indeed, if you evaluated teams allowing less than 65 points, between 65 and 70 points, and more than 70 points a game over the entire 24 years of the modern era, you'd find that the stingiest teams tend to underperform (-.025 PASE).

But the aggregate numbers on scoring defense are misleading. If you look at the three eight-year periods of the modern tourney, you'll find that the defensively tough squads have changed their performance significantly, as have the more lax defensive squads. Take a look:

Between 1985 and 1992, teams yielding less than 65 points a game were -.121 PASE underperformers. In the middle years of the modern dance, they "improved" to just -.046 underachievers. Since 2001, however, they've transformed into solid overachievers, with a PASE of +.052. Meanwhile, the fortunes of defensively generous teams have gone in reverse. Just look at the performance difference for teams allowing more than 70 points a game between 1993-2000 and 2001-2008. In the earlier era, these squads were +.059 overachievers. More recently, they're -.121 underachievers.

So the gist is this: the value of scoring defense in foretelling tourney overachievement has swung dramatically over the course of the 64-team era. Where it once was a sign of underachievement, it is now a solid indicator of overperformance.

If you could only know one thing about a team, aside from its seeding, scoring margin would be the factor to pick. Not coaching experience. Not conference affiliation. Not offensive firepower. The magic tourney performance indicator is the average number of points between what a team scores and what it allows per game.

How's this grab you? Twenty champions in a row have come to the dance with an average scoring margin of more than 10 points. More importantly for bracket pondering, since 1989, only ten teams per tourney have been seeded one through four with a margin greater than 10 points, and the champion has always been among them.
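Scoring margin is simple arithmetic, and the seed-plus-margin screen above is just as easy to automate. A Python sketch, assuming season point totals and games played for each team; the `Team` record, the helper names and the sample teams are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Team:
    name: str
    seed: int
    points_for: int      # season total points scored
    points_against: int  # season total points allowed
    games: int


def scoring_margin(team: Team) -> float:
    """Average points scored minus average points allowed per game."""
    return (team.points_for - team.points_against) / team.games


def contenders(field: list[Team], max_seed: int = 4, min_margin: float = 10.0) -> list[Team]:
    """Teams seeded 1 through max_seed whose scoring margin exceeds min_margin."""
    return [t for t in field if t.seed <= max_seed and scoring_margin(t) > min_margin]


field = [
    Team("Team A", 1, 2600, 2200, 32),  # margin 12.5 -> kept
    Team("Team B", 3, 2400, 2100, 32),  # margin 9.375 -> dropped
    Team("Team C", 6, 2700, 2200, 33),  # margin ~15.2 but seeded too low -> dropped
]
print([t.name for t in contenders(field)])  # ['Team A']
```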

Interestingly enough, scoring margin has held steady in all three eight-year periods of the modern tourney era at 8.5 points. But its value as an indicator of tourney overachievement has steadily grown. (Maybe that's because, with offensive scoring going down, the relative worth of an 8.5-point margin increases, but I digress). Between 1985 and 1992, teams with a scoring margin above 10 points were +.044 PASE overperformers. That number grew to +.101 from 1993 to 2000. And since 2001, the overachievement value has swelled to +.134. Meanwhile, teams with a scoring margin less than six points per game are -.069 underachievers of late. For that matter, so are teams with an average scoring margin between six and ten (-.060).

If those numbers don't convince you of the power of scoring margin, try this line of reasoning on for size. With every additional point of scoring margin above eight, PASE values have steadily risen during the 64-team tourney era. Since 1985, teams scoring over eight points a game more than opponents have a PASE of +.029. At more than 10 points a game, that value rises to +.093. It's nearly twice that at greater than 12 points a game (+.175). Add two more points to the scoring margin (greater than 14 points) and the PASE doubles to +.353. Finally, teams beating opponents by an average of more than 15 points a game sport a near-half-game-per-tourney overachievement rate of +.429.
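One detail worth noting in that progression: the groups are cumulative, so a team with a 16-point margin counts toward every threshold. A Python sketch of the calculation, assuming each team is reduced to a (scoring margin, wins minus seed expectation) pair; the helper name and sample pairs are made up.

```python
def pase_above_thresholds(
    teams: list[tuple[float, float]],
    thresholds: tuple[float, ...] = (8, 10, 12, 14, 15),
) -> dict[float, float]:
    """PASE of all teams whose scoring margin exceeds each threshold.

    Each team is a (scoring_margin, wins_minus_seed_expectation) pair.
    Groups are nested: a team above 14 is also above 8, 10 and 12.
    """
    result = {}
    for t in thresholds:
        diffs = [d for margin, d in teams if margin > t]
        if diffs:  # skip thresholds no team clears
            result[t] = sum(diffs) / len(diffs)
    return result


sample = [(9.0, -0.5), (11.0, 0.5), (16.0, 1.5)]
print(pase_above_thresholds(sample))
# {8: 0.5, 10: 1.0, 12: 1.5, 14: 1.5, 15: 1.5}
```

Each step up the ladder strips out the lower-margin teams, so a rising PASE at higher thresholds is driven by the teams at the very top.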

As amazing as this progressive PASE increase is over the 24 years of the modern era, it's even more astounding when you divide the tourney into eight-year periods. Just look at the difference in these upward curves:

All three eight-year periods show a steady increase in overachievement for teams with progressively higher scoring margins. But the 2001-2008 period has a much steeper climb than the earlier years. At a scoring margin of more than 12 points a game, 2001-2008 teams are nearly quarter-game-per-tourney overachievers (+.223 PASE). With two additional scoring margin points, teams beat seed expectations by more than half a game per tourney (+.594). And teams with more than a 15-point average scoring margin since 2001 are whopping +.796 PASE overperformers.

I just can't get over it. The message is clear: before the brackets are even announced, scoring margin can help you safely narrow your list of potential deep advancers to a handful of teams. No other factor has that kind of predictive power, and it keeps getting stronger.

Way back in the late eighties, when I had no statistics at my disposal, made all my bracket picks on ill-informed assumptions, and actually won a pool or two, a team's winning percentage held powerful sway over me. Faced with the choice of an eleventh-seeded 28-2 Mid-Major against a sixth-seeded 19-11 Big Six team, I often picked the better record. My reasoning was simple: teams that lose so infrequently know how to win, and that confidence counts for something, no matter how strong their schedule is.

Of course, when I actually started evaluating the numbers in the early nineties, this "winning culture" theory was completely and utterly debunked. And since that time, I barely pay attention to winning percentage when I fill out my bracket. In fact, in the last couple of years, I haven't even bothered to write about winning percentage in this trends article, because I just assumed that conditions hadn't changed since my initial discoveries about the insignificance of inflated records.

Now I find that my assumptions about winning percentage are wrong again. Guess what? Over the last eight years, winning percentage has been a reasonably solid indicator of tourney overachievement. I looked at the PASE values of teams with winning rates of .700 or below, between .701 and .775, and above .775 for the three eight-year periods since the tourney expanded to 64 teams in 1985. If you crank the analysis through your handy-dandy Bracketmaster research tool, you'll find that there are stark differences between the three eras. Check out this graph:

No wonder I gave up on winning percentage in the mid-nineties. If anything, having an inflated record between 1985 and 2000 was more likely a sign of an underachieving paper tiger. That's especially true over the first eight years of the modern era, when teams with winning rates above .775 actually underachieved at a -.057 PASE rate. They got slightly better between 1993 and 2000, but were still -.024 underperformers. Meanwhile, teams struggling to reach a .700 winning rate saw the opposite fate over the first two eight-year eras. From 1985 to 1992, they were the top overachieving group (+.042), then saw their PASE settle into a less impressive seed-defying +.027 rate. Not surprisingly, teams with middling records held very close to seed expectations for the first 16 years of the modern era.

All of that has changed in the last eight years. Don't ask why, but suddenly winning percentage is a pretty darn good indicator of overachievement. Teams with records above .775 are +.066 overachievers. This includes such Cinderellas as twelfth-seeded Gonzaga in 2001, eleventh-seeded Southern Illinois in 2002, and 12 seeds Butler in 2003, Wisconsin-Milwaukee in 2005 and Western Kentucky last year, all of which reached the Sweet Sixteen. Then there's tenth-seeded Kent State in 2002 and Davidson last year, both Elite Eight contenders.

What's also surprising about the 2001-2008 numbers is that the winningest teams aren't necessarily building their overachievement at the expense of the losingest teams. It's true that teams with records of .700 or less have recently been slight underachievers (-.009 PASE). But it's really the teams with middling records between .701 and .775 that are failing most to live up to seed expectations (-.072 PASE).
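If you want to sort a field into the three winning-percentage groups yourself, the only subtlety is the boundaries. This Python sketch puts exactly .700 in the lowest group and exactly .775 in the middle group, following the ranges described above; the function names and sample records are hypothetical.

```python
from collections import defaultdict


def win_pct_bucket(wins: int, losses: int) -> str:
    """Place a pre-tourney record in one of the three winning-percentage groups."""
    pct = wins / (wins + losses)
    if pct <= 0.700:
        return ".700 or below"
    if pct <= 0.775:
        return ".701-.775"
    return "above .775"


def pase_by_bucket(teams: list[tuple[int, int, float]]) -> dict[str, float]:
    """Average wins-over-seed-expectation per group; teams are (wins, losses, diff)."""
    groups: dict[str, list[float]] = defaultdict(list)
    for wins, losses, diff in teams:
        groups[win_pct_bucket(wins, losses)].append(diff)
    return {bucket: sum(d) / len(d) for bucket, d in groups.items()}


print(win_pct_bucket(21, 9))   # .700 or below
print(win_pct_bucket(31, 9))   # .701-.775
print(win_pct_bucket(28, 2))   # above .775
```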

The lesson for this year: I'm going to take a closer look at both high-seeded favorites and low-seeded longshots that have strong winning percentages. While their records might not be the key determinant in advancing them in my bracket, they might serve as a tiebreaker for those match-ups that I'm struggling to call.

As long as I've been listening to college hoops pundits, it's been a commonly held belief that pre-tourney momentum is a key indicator of whether teams will make deep runs or early exits. While most commonly held beliefs about the dance turn out to be myths, this one has some merit -- though probably not as much as you'd think.

There are really two ways to gauge momentum heading into the tournament: by evaluating the length of winning streaks or the number of wins in the final ten games. The winning-streak approach is complicated by the fact that many strong teams come to the dance with a single-loss "streak." That's because they get knocked out of their conference tournament. The only teams that really enter the dance with substantial winning streaks are those that win their conference tourney -- and the balance is more weighted toward Mid-Majors and Small conferences than Big Six teams.
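Both momentum gauges fall out of a team's game-by-game results. A minimal Python sketch, assuming results are stored as a chronological string of 'W' and 'L' (a hypothetical format); `last_ten_wins` and `current_streak` are illustrative names.

```python
def last_ten_wins(results: str) -> int:
    """Wins in the final ten games; results is a chronological 'W'/'L' string."""
    return results[-10:].count("W")


def current_streak(results: str) -> tuple[str, int]:
    """Type ('W' or 'L') and length of the streak carried into the tourney."""
    if not results:
        raise ValueError("no games played")
    last = results[-1]
    # Strip the trailing run of identical results and measure what was removed.
    length = len(results) - len(results.rstrip(last))
    return last, length


season = "WWLWWWWLWWWWWL"  # made-up season ending in a conference-tourney loss
print(last_ten_wins(season))   # 8
print(current_streak(season))  # ('L', 1)
```

A ('L', 1) result is the single-loss "streak" described above; only a length of two or more would count as a true losing streak.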

One thing is clear about the value of a winning streak: if you're coming to the dance with more than a one-game losing streak, you're not a good bet to reach the Final Four or win the championship. Of the 88 teams that have entered the tourney with a legitimate losing streak -- almost all of whom are Big Six schools -- only three (Arizona in 1997, North Carolina in 2000 and UCLA two years ago) made it to the Final Four. And Arizona is the only one of those to cut down the nets.

The more meaningful gauge of pre-tourney momentum is the number of wins in the final ten games. The average team enters the dance with 7.3 wins in its last ten pre-tourney match-ups. The number has held pretty constant over the three eight-year periods of the modern era. What hasn't held constant are the fortunes of teams with higher and lower "last-ten" win totals than the average.

We examined teams with fewer than seven wins, seven or eight wins, and nine or ten wins in their last ten pre-tourney games. Here's what the numbers show:

Teams with fewer than seven wins in their last ten games are generally underachievers. Yes, from 1993 to 2000, they were slight overperformers (+.028 PASE). But since 2001, they've failed to live up to seed expectations at a more significant rate (-.067 PASE). Surprisingly, teams with seven or eight wins in their last ten games don't fare much better. (In fact, if you look at the 24-year numbers, sub-seven teams have an overall PASE of -.015, while seven-eight teams disappoint at a nearly identical -.011 rate.) The difference between the two most "momentumly-challenged" groups is that seven-eight teams have at least lived up to seed expectations since 2001, posting a +.004 PASE.

Without question, the most reliable overachieving momentum teams throughout the modern era are the ones winning nine or ten of their final ten games. These teams have a 24-year PASE of +.040, and they have improved in each eight-year period since 1985. Most recently, they're sturdy +.071 PASE overachievers. Ironically, though, there hasn't been a tourney champion who won nine or ten of their final ten pre-tourney games since Maryland did the trick in 2002. And only two champs have run the table in their last ten games: Louisville in 1986 and UCLA in 1995. In fact, "ten-for-ten" teams, overall, are actually -.010 underachievers; it's the "nine-for-ten" teams that defy seed expectations the most: they're +.060 overachievers.

So what's the final word on pre-tourney momentum? I wouldn't pencil in a deep bracket run for any team coming to the tourney with a true losing streak. And I'd be leery of any squad with fewer than seven wins in their last ten games. As for the teams winning seven or more games, I might look a little more favorably on the hotter squads. But knowing that the last six champs have won seven or eight of their last ten match-ups, I wouldn't make any bracket decisions on the basis of momentum beyond the Elite Eight.

One of the tourney trends that has changed most dramatically over the last 24 years is the degree to which teams rely on guards for their scoring. The backcourt's share of scoring has risen in each eight-year period of the modern dance. From 1985 to 1992, teams relied on guards for just 44 percent of their scoring. Over the next eight years, the backcourt contributed 48 percent of the tourney field's points. And since 2001, the average tourney team gets 52 percent of its points from guards.

With such a meaningful movement toward more guard-dominant tourney fields, you'd think there would be a corresponding shift in the fortunes of these teams as they wend through the brackets. And that thinking would be pretty much wrong.

We looked at three classes of teams: those getting less than 45 percent, between 45 and 60 percent and more than 60 percent of their points from guards. Here are a few of the findings:

• In each eight-year period of the modern era, the most frontcourt-dominant teams have overachieved. It's true that they were bigger seed-defiers from 1985-1992 (+.076 PASE) than they are of late. But their +.006 PASE since 2001 is still the highest overachievement of the three scoring balance classes.

• The most balanced scoring teams (45-to-60 percent of points from guards) are consistent, albeit slight, underachievers. They fell dramatically short of seed expectations between 1985 and 1992 (-.103 PASE). But they've been just a hair under seed projection since then (-.005 PASE). Why the underperformance? My contention is that balanced teams can't exploit mismatches as easily...but that's just a theory.

• The most guard-dominant squads have never been overperformers in any eight-year period of the 64-team era. They have, however, improved to the point where they've met seed expectations since 2001. Before that, they were consistent -.020 underachievers.
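The three scoring-balance classes rest on a simple share calculation. A Python sketch, assuming per-game scoring averages for a team's guards and for the whole team; the function names are hypothetical, and assigning exactly 45 and exactly 60 percent to the balanced class is an assumption about how the boundaries were drawn.

```python
def guard_share(guard_points: float, team_points: float) -> float:
    """Percentage of a team's scoring that comes from its guards."""
    if team_points <= 0:
        raise ValueError("team_points must be positive")
    return 100.0 * guard_points / team_points


def balance_class(share: float) -> str:
    """Bucket a guard-scoring share into the three classes (boundaries assumed)."""
    if share < 45:
        return "frontcourt-dominant"
    if share <= 60:
        return "balanced"
    return "guard-dominant"


# Made-up team: guards score 41.6 of its 80 points per game.
share = guard_share(41.6, 80.0)
print(f"{share:.1f}% -> {balance_class(share)}")  # 52.0% -> balanced
```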

The conclusion: while the tourney field is relying on guard scoring more than ever, that doesn't mean guard-dominant teams are performing better than frontcourt-reliant squads. In fact, if you look at the average percentage of points that tourney advancers have gotten from guards in each eight-year period of the modern era, you'll find that deeper advancers tend to be more frontcourt-oriented squads. Here's the proof:

A couple points are clear from this graph: 1) you can see that each succeeding eight-year era has featured increasingly guard-oriented advancers, but 2) the general trend in each era is that teams become more frontcourt-dominant the deeper they go in the tourney. That was particularly the case between 1985 and 1992. Final Four contenders were three percent less reliant on guards than the overall field. And the ultimate champion was another two percent less backcourt-dominant. Between 1993 and 2000, while the tourney field got 48 percent of its points from guards, semi-finalists got 44 percent. Since 2001, it is true that the tourney field has been just as guard-reliant as Final Four contenders (52 percent). But the ultimate champion gets eight percent more of its points from big men.

Here's how I've looked at scoring balance in recent years: it doesn't influence my bracket picks either way in the early rounds. I don't buy into conventional wisdom that you need a high-scoring guard for a deep tourney run. But I'm not swayed by the trend toward frontcourt-dominant advancers either. What I do pay attention to, however, is my choice of champions: all things being equal, I'll pick the team with better big men.

No one would deny that having a strong bench is critical to basketball success at every level. But does that mean it matters during March Madness? The short answer is no.

Throughout the course of the 64-team tourney era, the average percentage of points teams have gotten from their top five scorers is right around 80 percent. We evaluated the PASE of teams getting less than 75 percent, between 75 and 80 percent, and more than 80 percent of their scoring from their top five scorers. Here's what we found:

The teams that get bigger contributions from their bench were actually big underachievers (-.102 PASE) in the early period of the modern era. Then their fortunes reversed between 1993 and 2000 (+.108). Since 2001, deeper squads are the biggest overperformers of the three "scoring depth" groups...but +.020 isn't exactly emphatic seed defiance.

In fact, if you're looking for the group that's been the most consistent overachiever -- regardless of degree -- that would be the most balanced of the three groups. Teams getting between 75 and 80 percent of their points from starters have overperformed in every eight-year period since 1985...though the extent of that overperformance has diminished to just +.005 since 2001.

And what about teams that put the biggest burden on their starters? Well, they were actually overachievers in the early years of the modern tourney. But they've been underachievers since 1993. That said, they've only fallen short of seed expectations by a scant -.013 PASE in the last eight years.

That's too slim a margin to draw any major conclusions about the value of bench play. And when you consider that semi-finalists since 2001 are almost exactly as deep as the tourney field (while champions are actually three percent more reliant on starters), slight differences in PASE values aren't good numbers to hang your bracket picks on.

Bottom line: while team scoring depth might be an important factor in getting to the tourney, it certainly isn't a key indicator of whether teams advance. If nothing else, it's good to know there are a few numbers out there that you don't need to worry about.

We already looked at team experience from the perspective of consecutive tourney bids. But there's another way to measure experience: how many years of college ball have your key players played? If you assign one year to each college class (one for freshmen, two for sophomores, three for juniors and four for seniors), you can measure the relative ages of the tourney teams' starting units.
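The class-year arithmetic is easy to script. This Python sketch assumes each starter is tagged with a class abbreviation ('Fr', 'So', 'Jr', 'Sr'); the mapping and the `team_age` name are illustrative assumptions.

```python
# Class year per starter: 1 = freshman, 2 = sophomore, 3 = junior, 4 = senior.
CLASS_YEARS = {"Fr": 1, "So": 2, "Jr": 3, "Sr": 4}


def team_age(starters: list[str]) -> float:
    """Average class year of a starting five."""
    if len(starters) != 5:
        raise ValueError("expected exactly five starters")
    return sum(CLASS_YEARS[c] for c in starters) / len(starters)


print(team_age(["Sr", "Jr", "Jr", "So", "Fr"]))  # 2.6
print(team_age(["Jr"] * 5))                      # 3.0
```

A lineup of one senior, two juniors, a sophomore and a freshman averages 2.6, which would put it among the teams with an average age below 2.8.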

Overall, we found that the average tourney team starts a group of juniors. That's an average class of 2.97, to be exact. In the earliest eight-year period, starting units were slightly older than juniors (3.02), and since 2001, they've been younger (2.92).

That doesn't seem like much of a difference over the years. And it seems to debunk the popular notion that talented youth is being served of late. If you look at the PASE values of younger and older teams, the numbers also support the notion that youth does not confer a performance advantage. In each of the three eight-year periods of the 64-team era, teams with an average team age below 2.8 were slight underachievers. Teams with an average team age of 2.8 to 3.0 had mixed results -- underachieving between 1993 and 2000, but otherwise overachieving. And teams averaging more than a junior starting unit (greater than 3.0) had the opposite fortune -- sandwiching two periods of underachievement around an eight-year run of defying seed expectations from 1993 to 2000.

All of that might make it sound as though the verdict is mixed on whether a team's collective age confers a performance advantage one way or the other. But if you examine the average age of teams advancing in the tourney, you'll see a different story. Here's how those numbers shake out:

For the first 16 years of the tourney, team age indeed didn't have a big impact on tourney performance. Just look at the trend lines for 1985-1992 and 1993-2000. In the earlier period, there isn't much difference between the tourney field, the Sweet Sixteens, the Final Four or the champion. They all hover around 3.0 -- a starting unit of juniors. In the middle tourney period, team age composition was slightly younger at every round of advancement than in 1985-1992, but the trend line was also fairly flat, with champions (3.10) slightly older than teams in previous rounds.

Since 2001, however, there has been a definite downward trend in the collective age of tourney advancers. With each two-round advancement in the tourney, teams get increasingly younger -- from 2.92 for the field, to 2.81 for Sweet Sixteen competitors, to 2.74 for the Final Four and 2.65 for champions. There's no denying it: over the last eight years, youth is definitely being served in the NCAA tourney.

The reason for the ascendancy of younger teams since 2001 isn't exactly a mystery. The rule requiring high school phenoms to pay a visit to the college ranks before going pro has put more young, dominant players into the tourney. And even without that, we have been seeing fewer and fewer quality players remain through their senior year. That means that older, senior-laden teams, while arguably more experienced, might also possess less raw talent.

The final verdict: I'm no longer persuaded that a team is equipped for a seed-defying tourney run just because they're stocked with seniors. On the other hand, I wouldn't advise advancing younger teams just because they're young either. The key is to examine the quality of those young squads. If they have players whose names are getting thrown around as first-round NBA draft candidates, then that probably means they've got star power. And that, more than age, should guide your impression of young teams.

It's been 15 years since the Strength of Schedule metric was first unveiled to measure the collective toughness of the opponents that colleges elected to play. "SOS," as we'll call it, was devised as a counterbalance to teams' raw win/loss records and an answer to the age-old argument: "It's not just how many you win, but who you beat."

The question for bracketeers is whether SOS has any inherent value in identifying tourney overachievement. In other words, regardless of records, do teams that subject themselves to tough competition fare better in the tourney than teams that cakewalk through a softer schedule?

The answer is somewhere in the middle. The average tourney team comes to the dance with an SOS ranking of 92 -- but that's inflated by the weak schedules (and thus high SOS rankings) of Small conference teams; the average SOS ranking of teams seeded one through eight is about 40. We broke the SOS years into three five-year periods: 1994 to 1998, 1999 to 2003, and 2004 to 2008. Then we examined teams with SOS rankings of 1 through 20, 21 through 50, and more than 50. Here's a picture of what we found:

The teams with the toughest schedules have been underachievers in two of the three five-year periods. And their -.039 PASE since 2004 is the worst of the three SOS groups. Interestingly, the teams with the easiest schedules have also predominantly underperformed. While they barely managed to beat expectations from 1994 to 1998 (+.009), they've been consistent underperformers since then. It's the teams whose schedules fall between these two extremes that have fared the best. Not only have teams with SOS rankings between 21 and 50 beaten seed projections in each five-year period, but the degree of their overachievement is increasing. Since 2004, it's been a hefty +.115 PASE.
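The grouping behind these numbers can be sketched as a two-way bucketing: each team lands in a five-year period and an SOS group, and PASE is averaged within each cell. This is a minimal sketch under stated assumptions: the function names, the tuple input format and the sample data are hypothetical, and each team's own PASE contribution (actual wins minus its seed's average wins) is assumed to be precomputed.

```python
from collections import defaultdict


def sos_group(sos_rank: int) -> str:
    """Place a team in one of the article's three SOS groups."""
    if sos_rank < 1:
        raise ValueError("SOS rankings start at 1")
    if sos_rank <= 20:
        return "1-20"
    if sos_rank <= 50:
        return "21-50"
    return "51+"


def sos_period(year: int) -> str:
    """Map a tourney year to one of the three five-year SOS periods."""
    for start, end in ((1994, 1998), (1999, 2003), (2004, 2008)):
        if start <= year <= end:
            return f"{start}-{end}"
    raise ValueError(f"{year} is outside the 1994-2008 window")


def pase_by_sos(teams):
    """Mean PASE per (period, SOS group).

    `teams` yields (year, sos_rank, wins_minus_seed_average) tuples (hypothetical format).
    """
    diffs = defaultdict(list)
    for year, rank, diff in teams:
        diffs[(sos_period(year), sos_group(rank))].append(diff)
    return {key: sum(vals) / len(vals) for key, vals in diffs.items()}


# Hypothetical sample: two tough-schedule teams and one mid-range team.
sample = [(2005, 10, -0.5), (2006, 15, 0.5), (2005, 30, 1.0)]
print(pase_by_sos(sample))
# {('2004-2008', '1-20'): 0.0, ('2004-2008', '21-50'): 1.0}
```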

One could certainly argue that a more balanced diet of competition avoids the pitfalls of both extremes. Teams with mildly tough schedules might not wear themselves down like teams facing a series of buzzsaws. Conversely, they might not be as untested as teams strolling through a lineup of cakewalks.

Here's where I wind up on SOS: I'm less likely to let a soft schedule influence whether I tab a team for under- or overachievement. But I may use an excessively tough schedule as a tiebreaker in determining how far I allow a higher seed to go. It's important to note that teams seeded one or two with SOS rankings of 1 through 20 are even bigger underachievers than the rest of the seeds. Last year, Tennessee and Duke both had hairy schedules -- and both underperformed. When you sit down to fill out your bracket, it's at least worth asking, "Is this high-seeded squad that just ran the gauntlet of fierce competition too worn down for a long tourney run?"

In a perfect RPI world, the top 64 ranked RPI teams would make the tourney, the Selection Committee would seed them exactly according to their RPI values -- and the higher ranked RPI teams would always advance in the brackets.

Of course, we all know that the RPI is far from perfect. Thankfully, so does the Selection Committee. That's why they routinely seed some teams higher than where their RPI suggests they should be and other teams lower. Think about a team rated ninth in RPI. If it were seeded correctly according to RPI, it would be a No. 3 seed (since teams ranked 1-8 in RPI would take the top two seeds). If this team were "over-seeded," it would've been given a No. 1 or 2 seed. If it were under-seeded, the committee would've relegated it to a No. 4 seed or lower.
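The RPI-to-seed mapping in that example works out to four teams per seed line, so the RPI-implied seed is the rank divided by four, rounded up. The sketch below shows that mapping and the over-/under-/right-seeded labels. The function names are hypothetical, and capping ranks beyond 64 at a 16 seed is our assumption, since the article doesn't say how it handled low-RPI automatic qualifiers.

```python
import math


def rpi_seed(rpi_rank: int) -> int:
    """Seed line implied by RPI alone: ranks 1-4 -> 1 seed, 5-8 -> 2 seed, and so on.

    Assumption: ranks beyond 64 are capped at a 16 seed.
    """
    if rpi_rank < 1:
        raise ValueError("RPI ranks start at 1")
    return min(16, math.ceil(rpi_rank / 4))


def seeding_label(rpi_rank: int, actual_seed: int) -> str:
    """Compare the committee's seed with the RPI-implied seed."""
    implied = rpi_seed(rpi_rank)
    if actual_seed < implied:
        return "over-seeded"   # better (lower-numbered) seed than RPI warrants
    if actual_seed > implied:
        return "under-seeded"  # worse seed than RPI warrants
    return "right-seeded"


# The article's example: the ninth-ranked RPI team.
print(rpi_seed(9))          # 3
print(seeding_label(9, 2))  # over-seeded
print(seeding_label(9, 4))  # under-seeded
```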

So is the Selection Committee making the right choice about which teams they elevate and demote? If the committee were accurate in its placements, right-seeded, over-seeded and under-seeded RPI teams would exactly meet seed expectations and their PASE values would be zero. On the other hand, if RPI had any validity as a performance indicator, the teams that the committee elevated above what their RPI values warranted would underachieve against expectations (since they didn't deserve their loftier seed). Meanwhile, the teams the committee dropped below where their RPI would've placed them could be expected to overachieve. So what's happened in the three five-year eras since RPI came into being in 1994? Take a gander:

One thing's for sure: RPI is not a reliable gauge of tourney performance. Under-seeded teams relegated to lower seeds than they deserved based on RPI rankings actually underachieved in every era when RPI logic says they should've overachieved. Meanwhile, teams that were seeded above where they deserved based on RPI actually overachieved in two of the three eras. Their most substantial overperformance (+.078 PASE) has come since 2004. Overall, the best performing group has been the teams seeded exactly where RPI says they should be -- never mind that RPI logic would have them precisely meeting seed expectations.

As to whether the Selection Committee is making the right choices in seeding the teams whose RPI rankings they disagree with, the evidence is mixed. The combined absolute values of the over-, under- and right-seeded PASE numbers in each of the three RPI eras could be thought of as the deviation from a perfect reallocation of RPI rankings. In that light, the Selection Committee did their best work in the early years of the RPI, from 1994 to 1998 (.091). They went off the rails between 1999 and 2003 (.262), but have since improved their reallocation of teams (.153).
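One way to read that deviation measure is as the sum of the three groups' absolute PASE values: a perfect committee would leave every group at zero, and any over- or underachievement in either direction adds to the total. The sketch below assumes that summing is how the three values are combined (the article doesn't spell it out); the function name and the sample values are hypothetical, not the article's data.

```python
def seeding_deviation(pase_by_group: dict[str, float]) -> float:
    """Sum of absolute PASE values across the over-, under- and right-seeded groups.

    Assumption: the groups are combined by summing; a perfect committee scores zero.
    """
    return sum(abs(value) for value in pase_by_group.values())


# Hypothetical era values, not the article's data.
era = {"over-seeded": 0.25, "under-seeded": -0.5, "right-seeded": 0.125}
print(seeding_deviation(era))  # 0.875
```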

None of that changes my opinion of RPI: I don't pay any attention to it in making bracket selections. If I did, I'd use it as an inverse performance indicator. The fact is, the teams you'd think should overachieve based on RPI ranking actually perform the worst. And the teams that should underachieve are the best PASE performers.

Theoretically, every game in the NCAA tournament is supposed to be played on a neutral site to avoid home-court advantages. In reality, there have been 110 games since 1985 where one of the competitors was playing within 100 miles of their campus.
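The article doesn't say how the 100-mile radius was measured. The sketch below assumes straight-line (great-circle) distance between campus and venue, computed with the haversine formula and a mean Earth radius of 3,958.8 miles; the function names and the (latitude, longitude) input format are hypothetical, and driving distance would give different results.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius (assumed spherical Earth)


def miles_between(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in miles via the haversine formula (degrees in)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def close_to_home(campus: tuple[float, float], venue: tuple[float, float],
                  limit_miles: float = 100.0) -> bool:
    """True if the venue lies within `limit_miles` of campus (straight-line assumption)."""
    return miles_between(*campus, *venue) <= limit_miles


# One degree of latitude is roughly 69 miles.
print(round(miles_between(0, 0, 1, 0), 1))  # 69.1
print(close_to_home((0, 0), (1, 0)))        # True
```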

The question is: has that proximity to home resulted in a real advantage in the tourney? On balance, the answer is yes. The 110 "close-to-home" teams should've won about 114 games based on seed projections; they actually won 130 for a PASE of +.148.
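As a worked check on those numbers, here is the arithmetic, assuming a group's PASE is the average gap per team between actual wins and seed-expected wins (the per-team definition is the article's; averaging over the group is our reading of it):

$$
\text{PASE} = \frac{W_{\text{actual}} - W_{\text{expected}}}{N} = \frac{130 - 114}{110} = \frac{16}{110} \approx +0.145
$$

The small gap from the reported +.148 comes from the expected total being "about" 114: working backward, $0.148 \times 110 \approx 16.3$ extra wins, which puts the unrounded expected total near 113.7.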

Here's another key point to consider about a close-to-home-court advantage in the tournament: it's becoming a stronger indicator of overperformance as time goes on. Take a look at the PASE numbers for the three eight-year periods of the 64-team era:

Since 2001, teams playing within 100 miles of campus -- like Davidson last year -- have overachieved at a +.202 PASE clip. That's nearly twice as strong an overperformance rate as in the 1993-2000 period.

Here's where I come out on tourney teams effectively playing home games: they absolutely do overachieve, particularly in the first two rounds (+.151 PASE). After that point, however, who plays where doesn't matter as much (+.135). Besides, to the Selection Committee's credit, it's placing fewer teams within a short drive of their campus: between 1985 and 1992, 55 games involved close-to-home teams. Since 2001, there have only been 28 of these games.

All of my analysis of the 14 trends that came before this one relies on PASE -- you know, performance against seed expectations -- to quantify over- or underachievement in the tourney. PASE is calculated by comparing a team's actual wins at a particular seed against the average number of wins that seed has earned over the 24 years of the modern era. Top seeds, for instance, average 3.42 wins per tourney.

That's a helpful number for comparison, but that doesn't mean top seeds have consistently averaged 3.42 wins through each era of the tourney. The fact is, since 2001, one seeds have averaged 3.53 wins per dance. Conversely, in the earliest period of the modern era, from 1985 to 1992, they only averaged 3.28.
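The PASE calculation described above can be sketched in two steps: average each seed's wins across all tourneys, then score a group of teams by the mean gap between their actual wins and their seed's average. This is a minimal sketch, assuming historical results are available as (seed, wins) pairs; the function names and sample data are hypothetical, and averaging per-team gaps to get a group's PASE is our reading of the article.

```python
from collections import defaultdict


def seed_averages(history):
    """Average wins per tourney for each seed.

    `history` yields (seed, wins) pairs, one per team per tourney (hypothetical format).
    """
    totals = defaultdict(lambda: [0, 0])  # seed -> [total wins, team count]
    for seed, wins in history:
        totals[seed][0] += wins
        totals[seed][1] += 1
    return {seed: wins / count for seed, (wins, count) in totals.items()}


def pase(teams, averages):
    """Mean of (actual wins - seed's average wins) over a group of (seed, wins) teams."""
    diffs = [wins - averages[seed] for seed, wins in teams]
    if not diffs:
        raise ValueError("need at least one team")
    return sum(diffs) / len(diffs)


# Hypothetical history: two 1 seeds and two 2 seeds.
averages = seed_averages([(1, 4), (1, 3), (2, 2), (2, 1)])
print(averages)                          # {1: 3.5, 2: 1.5}
print(pase([(1, 5), (2, 1)], averages))  # 0.5
```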

So which seeds are on the rise -- and which are on the decline? We divided the 24 years of the tournament into three eight-year periods and here's what we found:

The seed position that has improved the most over the last eight years is the three seed, which is averaging half a game more wins per tourney (2.16) than it did in the first 16 years of the dance (1.66). Seven seeds have also made big strides since 2001, averaging 1.03 wins per tourney when they only won two-thirds of a game per dance between 1993 and 2000. In some respects, seven seeds are the new six seeds. That's because six seeds -- which had been a great seed to look for deep-advancing sleepers from 1985 to 2000, averaging 1.36 wins -- are only winning a single game per tourney.

Six seeds aren't the only big losers in recent years. Four seeds and eight seeds have also taken big falls. Between 1993 and 2000, four seeds won 1.75 games per dance. Now they're winning half a game less (1.25). Eight seeds have gone from winning about three-quarters of a game per tourney up through 2000 to half a game since.

Which seeds have performed the most consistently over the 24 years of the tourney? Two, five and nine seeds have seen only minor changes in their average win totals across each eight-year period.

Among the lower-seeded squads, 12 seeds -- as has been well documented -- have been more prolific winners of late. And the biggest loser has been 14 seeds. In the early years of the tourney, they sprang upsets more often than 13 seeds. Now their performance is closer to that of 15 seeds.
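Splitting a seed's record into the three eight-year periods is what exposes these shifts, which a single 24-year average smooths over. The sketch below shows that split under stated assumptions: the function names, the (year, seed, wins) record format and the sample records are hypothetical, not real tourney results.

```python
from collections import defaultdict


def era(year: int) -> str:
    """Label a year with its eight-year period of the 64-team era (1985-2008)."""
    if not 1985 <= year <= 2008:
        raise ValueError(f"{year} is outside 1985-2008")
    start = 1985 + 8 * ((year - 1985) // 8)
    return f"{start}-{start + 7}"


def seed_average_by_era(records, seed):
    """Average wins per tourney for one seed, split by eight-year period.

    `records` yields (year, seed, wins) tuples, one per team per tourney.
    """
    wins_by_era = defaultdict(list)
    for year, team_seed, wins in records:
        if team_seed == seed:
            wins_by_era[era(year)].append(wins)
    return {label: sum(w) / len(w) for label, w in sorted(wins_by_era.items())}


# Hypothetical records, not real tourney results.
records = [(1985, 1, 4), (1986, 1, 2), (2001, 1, 6), (2001, 2, 1)]
print(seed_average_by_era(records, 1))  # {'1985-1992': 3.0, '2001-2008': 6.0}
```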

So what does all this mean? Should we just throw out PASE values, since they're based on seed win averages that are always shifting? Well, considering that PASE gets recalculated after every tourney, that's not the answer. But I do think it's important not to let old biases about seeds calcify. For years, I've been carrying with me the misapprehension that six seeds were the true sleepers in the tourney. It turns out that trend ended about eight years ago. I should've paid more attention to seven seeds. And this year, it might be worthwhile to treat three seeds as equal performers to two seeds.